TL;DR

I used sparse autoencoders (SAEs) to extract interpretable features from an LLM emulating different personas (e.g., assistant, pirate) and built a UI for exploring persona-associated features. Consistent with the Assistant Axis paper, when I fit PCA to per-role vectors in SAE feature space, assistant-like roles fall on one end of the first principal component and role-play-heavy roles on the other. A second UI visualizes persona drift by showing how strongly each conversation turn aligns with an “assistantness” axis in SAE feature space.

Introduction

LLMs can emulate a wide range of personas, but post-training typically anchors behavior toward a default helpful-assistant style.1 A recent paper, Assistant Axis,2 studies the geometry of model persona space and finds that its dominant direction tracks how much the model is operating in its default assistant mode. The paper also shows that role prompts and conversations can move the model along this axis, and that a similar axis is already visible in pre-trained models.

A mental model I find useful is that LLMs are simulators.3 Across pre-training and instruction tuning, models internalize many personas, and prompts can selectively amplify one persona over another. Some personas are clearly undesirable, and misaligned behavior can sometimes resemble the model shifting into a different persona or narrative.4

Separately, I’m intrigued by mechanistic interpretability interfaces, especially interactive “maps” that show features and circuits, like Anthropic’s sparse autoencoder (SAE) work and their feature-explorer style visualizations. It’s fun to browse a model’s concepts and see how they relate.

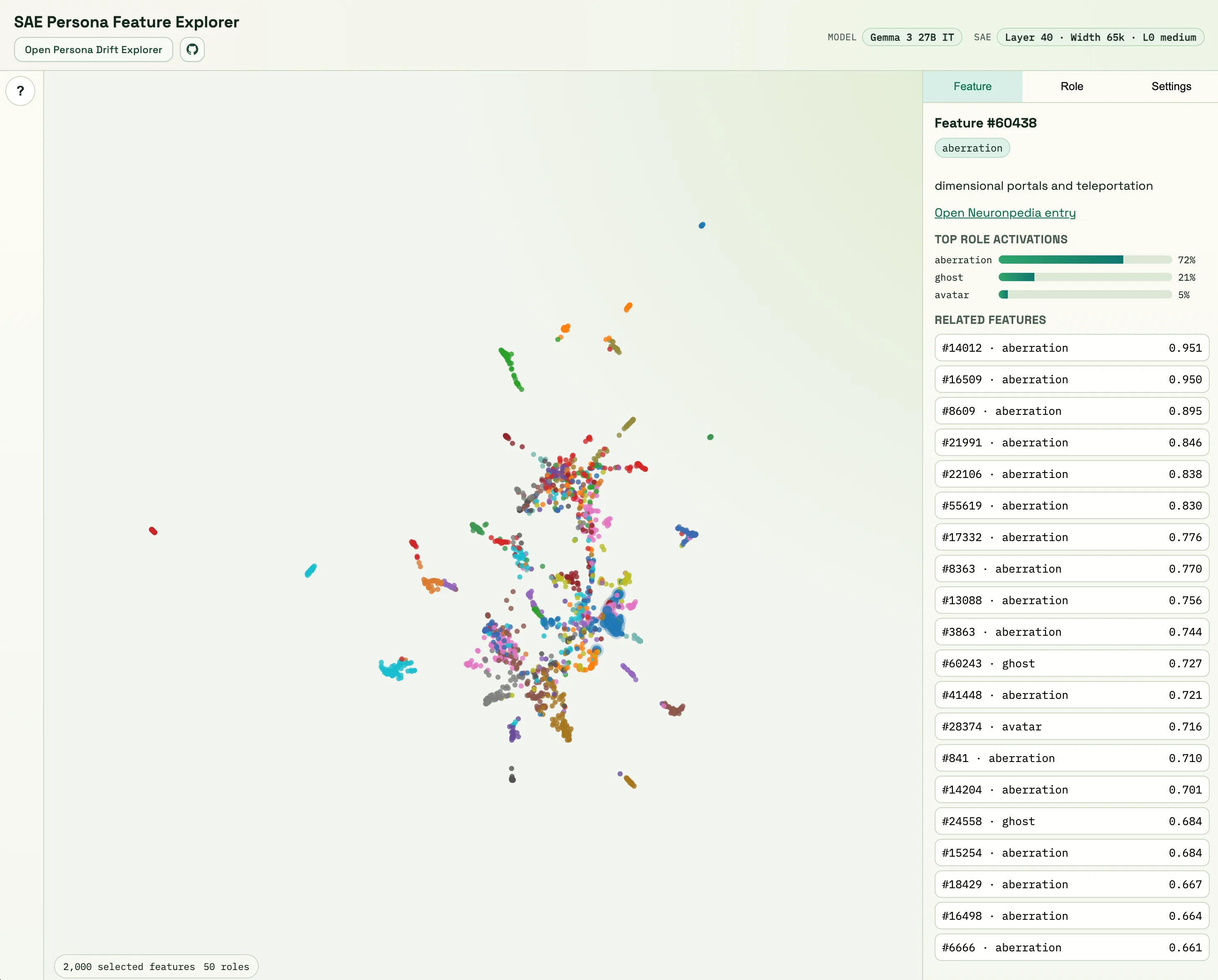

I decided to combine both interests for BlueDot’s Technical AI Safety Project. I use SAEs to explore how persona prompting shows up in interpretable feature space, and I package the results into an interactive UI for easy browsing. Concretely, I wanted a UI where someone can quickly explore questions like: Which SAE features are most associated with a given role? Which roles look similar in SAE feature space? Do assistant-like roles and role-play-heavy roles separate along a consistent direction? I also built a second UI that visualizes the “persona drift” examples from Assistant Axis in SAE feature space, with per-feature and token-level views.

The main takeaways are:

- Consistent with Assistant Axis, I see a prominent assistant <-> role-play axis in the geometry of role vectors in SAE feature space.

- In the drift transcripts I tested, movement along this axis is visible in SAE space as well (i.e., turns can drift away from the assistant-like direction).

In my experiments I use the Gemma 3 27B instruction-tuned model and a Gemma Scope 2 sparse autoencoder trained on the layer-40 residual stream, with ~65k features and medium sparsity.

SAE Feature Explorer

Filtering and ranking features

One obstacle was going from token-level SAE activations (~65k features per token) to something I could compare across roles, so I first needed to condense them into a single vector per role. For each response, I aggregate the per-token SAE activations into one response-level vector in SAE space, then average those response-level vectors to produce one role vector per role. I use the same questions as the Assistant Axis paper and a subset of its roles (50), with 5 system-prompt variations × 240 questions = 1,200 responses per role. You can think of a role vector as a fingerprint for a role: a single vector summarizing which SAE features that role tends to activate, and how strongly, across many answers.
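A minimal sketch of this aggregation step, assuming each response is a NumPy array of shape (n_tokens, n_features). The post does not say how tokens are pooled, so mean pooling here is an assumption, and the function names are hypothetical.

```python
import numpy as np

def response_vector(token_acts):
    """Collapse per-token SAE activations (n_tokens, n_features) into one vector.

    Mean pooling over tokens is an ASSUMPTION for illustration; another
    pooling rule (e.g. max) would slot in here the same way.
    """
    return token_acts.mean(axis=0)

def role_vector(responses):
    """Average the response-level vectors of one role into a single role vector."""
    return np.stack([response_vector(r) for r in responses]).mean(axis=0)

# Toy usage: 3 responses of different lengths, 4 features instead of ~65k.
rng = np.random.default_rng(0)
responses = [np.maximum(rng.normal(size=(n_tok, 4)), 0.0) for n_tok in (5, 8, 3)]
vec = role_vector(responses)
```

Pooling each response before averaging keeps long responses from dominating the role vector, since every response contributes one vector regardless of its token count.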

A second obstacle is that many features in a role vector are not very informative (e.g., punctuation-related or hard to interpret). To focus on role-specific features, I apply a few heuristics meant to remove features that are always off, always on, or too unstable to say much about persona differences. First, I remove features with non-positive mean activation. Among the remaining active features, I keep only those whose variation across roles is at least the median, since features that are almost always on tend to vary less across roles. I also compute a stability score by splitting each role’s responses in half and checking whether a feature appears consistently across the two halves (the intuition being that unstable patterns are more likely to be prompt-specific). Finally, I rank features by score = stability × sd, where sd is the feature’s cross-role spread (standard deviation). This penalizes both unstable high-variance features and stable but weakly discriminative ones.
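The filtering and ranking heuristics can be sketched roughly as follows. This is a hypothetical reconstruction, not the notebook’s code. In particular, the stability score is assumed to be the correlation across roles between role vectors built from the two half-splits, which is only one way to operationalize “appears consistently across the two halves.”

```python
import numpy as np

def select_and_rank(role_vectors, half_a, half_b):
    """Filter and rank SAE features by stability x cross-role spread (hypothetical sketch).

    role_vectors:   (n_roles, n_features), each role averaged over all its responses.
    half_a, half_b: (n_roles, n_features), role vectors built from two random halves
                    of each role's responses (used only for the stability score).
    Returns (ranked indices of kept features, per-feature scores).
    """
    mean_act = role_vectors.mean(axis=0)
    active = mean_act > 0                           # drop features with non-positive mean
    sd = role_vectors.std(axis=0)                   # cross-role standard deviation
    keep = active & (sd >= np.median(sd[active]))   # at least the median spread among active features

    # ASSUMED stability measure: Pearson correlation, across roles, between the
    # two half-split role vectors; negative correlations are clipped to zero.
    a = half_a - half_a.mean(axis=0)
    b = half_b - half_b.mean(axis=0)
    denom = np.sqrt((a ** 2).sum(axis=0) * (b ** 2).sum(axis=0))
    corr = np.divide((a * b).sum(axis=0), denom, out=np.zeros_like(denom), where=denom > 0)
    stability = np.clip(corr, 0.0, None)

    score = stability * sd
    kept = np.flatnonzero(keep)
    ranked = kept[np.argsort(-score[kept])]         # highest score first
    return ranked, score

# Toy data: 6 roles x 10 features; the two halves are noisy copies of one signal.
rng = np.random.default_rng(1)
signal = rng.gamma(1.0, 1.0, size=(6, 10))
half_a = signal + 0.1 * rng.normal(size=signal.shape)
half_b = signal + 0.1 * rng.normal(size=signal.shape)
role_vectors = (half_a + half_b) / 2
role_vectors[:, 0] = -1.0                           # non-positive mean, so feature 0 is filtered out
ranked, score = select_and_rank(role_vectors, half_a, half_b)
```

Multiplying the two terms means a feature has to be both reproducible across halves and spread out across roles to rank highly, matching the intent described above.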

What the UI shows per feature

After building the role vectors and filtering/ranking features, I can look at each retained feature across all roles. This gives a “role profile” for the feature: a vector showing how strongly each role activates it. In the UI, each feature also has a “preferred role,” the role that activates it most strongly, and features are laid out on a 2D UMAP map. Since UMAP inevitably distorts distances, I treat the map as exploratory rather than as literal geometry. The notebook explains the feature selection process in more detail.
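A short sketch of role profiles and preferred roles, assuming role vectors stored as a NumPy matrix of shape (n_roles, n_features) and a list of selected feature indices. The function names and toy numbers are illustrative only.

```python
import numpy as np

def role_profiles(role_vectors, selected):
    """Row i = activation of selected feature i across all roles (its 'role profile')."""
    return role_vectors[:, selected].T              # (n_selected, n_roles)

def preferred_roles(profiles, role_names):
    """Name of the role that activates each feature the most."""
    return [role_names[j] for j in profiles.argmax(axis=1)]

roles = ["assistant", "pirate", "economist"]
rv = np.array([[0.1, 2.0, 0.0, 0.5],                # toy role vectors, 4 features
               [0.9, 0.1, 0.3, 0.2],
               [0.2, 0.4, 1.5, 0.1]])
prof = role_profiles(rv, selected=[0, 2, 3])
print(preferred_roles(prof, roles))                 # ['pirate', 'economist', 'assistant']
```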

I found that many SAE features are fairly broad: they activate across many roles, and the map often looks “intermingled” rather than cleanly separated. Still, there are pockets where role associations become sharper, and you can see coherent groupings in terms of which roles dominate a feature’s activation.

Importantly, when I say two roles or features “go together,” I don’t mean their 2D positions are literally close in UMAP. The “Related Features” shown in the UI are computed in the original high-dimensional space using cosine similarity: for each feature I can list the 20 features with the most similar role profiles, and for each role I can compare role profiles directly. Through that lens, semantically related roles often show up together. For example, the feature Neuronpedia labels “economic growth and expansion” (feature #816) is most strongly activated by economist, followed by analyst and accountant, and features most associated with one of these roles often have nearest-neighbor features associated with the others. Likewise, some features are shared primarily by roles like adolescent, fool, and amateur. The UI is mainly meant for browsing and spotting these kinds of relationships quickly.
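The “Related Features” lookup can be approximated as a top-k cosine-similarity search over role profiles in the original space. Here is a sketch with hypothetical function names and toy profiles. The UI’s actual implementation may differ.

```python
import numpy as np

def cosine_matrix(x):
    """Pairwise cosine similarity between the rows of x (zero rows get zero similarity)."""
    norms = np.linalg.norm(x, axis=1, keepdims=True)
    unit = x / np.where(norms > 0, norms, 1.0)
    return unit @ unit.T

def related_features(profiles, k=20):
    """For each feature (row), indices of the k features with the most similar role profiles."""
    sim = cosine_matrix(profiles)
    np.fill_diagonal(sim, -np.inf)                  # never list a feature as its own neighbor
    k = min(k, len(profiles) - 1)
    return np.argsort(-sim, axis=1)[:, :k]

# Toy role profiles: 4 features x 3 roles.
profiles = np.array([[1.0, 0.0, 0.0],
                     [0.9, 0.1, 0.0],
                     [0.0, 1.0, 0.2],
                     [0.0, 0.9, 0.3]])
print(related_features(profiles, k=1).ravel())      # [1 0 3 2]
```

Because the search runs on the full role profiles, two features can be close neighbors here even if UMAP happens to place them far apart.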

To get a more role-specific view, I also compute “residual feature activations”: for each question, I subtract the average activation across roles before building role vectors. This removes signal that is shared simply because all roles are responding to the same prompt, so differences between roles stand out more clearly. In this residual view, the map tends to show clearer “role islands,” which I’ve found more useful for discovering features specific to particular roles.
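Here is a sketch of the residual computation, assuming a complete grid of response vectors indexed by (role, question, feature). The post does not specify how the five system-prompt variants are combined, so collapsing them beforehand is an assumption.

```python
import numpy as np

def residual_role_vectors(acts):
    """Role vectors built from per-question residuals.

    acts: (n_roles, n_questions, n_features) response-level SAE vectors, ASSUMED
    to hold one vector per (role, question), e.g. already averaged over
    system-prompt variants.
    """
    per_question_mean = acts.mean(axis=0, keepdims=True)  # average over roles, per question
    residual = acts - per_question_mean
    return residual.mean(axis=1)                          # (n_roles, n_features)

rng = np.random.default_rng(3)
acts = rng.gamma(1.0, 1.0, size=(4, 7, 5))               # 4 roles, 7 questions, 5 features
res = residual_role_vectors(acts)
```

With a complete, balanced grid like this one, the result equals each plain role vector minus the mean role vector. The per-question form only makes a difference when some role/question cells are missing, which this sketch does not handle. Cosine similarities and UMAP layouts are not invariant to this centering, so the map can look different after it.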

Role–role similarity

I also computed pairwise cosine similarity between role vectors over the selected features and visualized it as a heatmap. The similarity structure broadly matches intuitive semantics: roles that feel conceptually close tend to be more similar in this representation, and roles that feel “different in kind” tend to be less similar. For example, trickster is much less similar to analyst than to absurdist, and the assistant role is most similar to roles like mentor, doctor, collaborator, and teacher.
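The heatmap values can be computed as in the minimal sketch below, which uses toy data. Here `selected` stands in for the features kept by the filtering step, and the numbers are hypothetical.

```python
import numpy as np

def role_similarity(role_vectors, selected):
    """Cosine similarity between every pair of roles, using only the selected features."""
    x = role_vectors[:, selected]
    unit = x / np.linalg.norm(x, axis=1, keepdims=True)
    return unit @ unit.T                            # (n_roles, n_roles), symmetric, ones on diagonal

rv = np.array([[1.0, 0.0, 2.0, 5.0],                # three toy roles, 4 features
               [0.8, 0.1, 1.9, 0.0],
               [0.0, 1.0, 0.1, 5.0]])
sim = role_similarity(rv, selected=[0, 1, 2])       # feature 3 is excluded
```

Rendering `sim` with something like matplotlib’s `imshow` gives a heatmap. Restricting to the selected features matters here: in the toy data, feature 3 would otherwise make roles 0 and 2 look similar.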

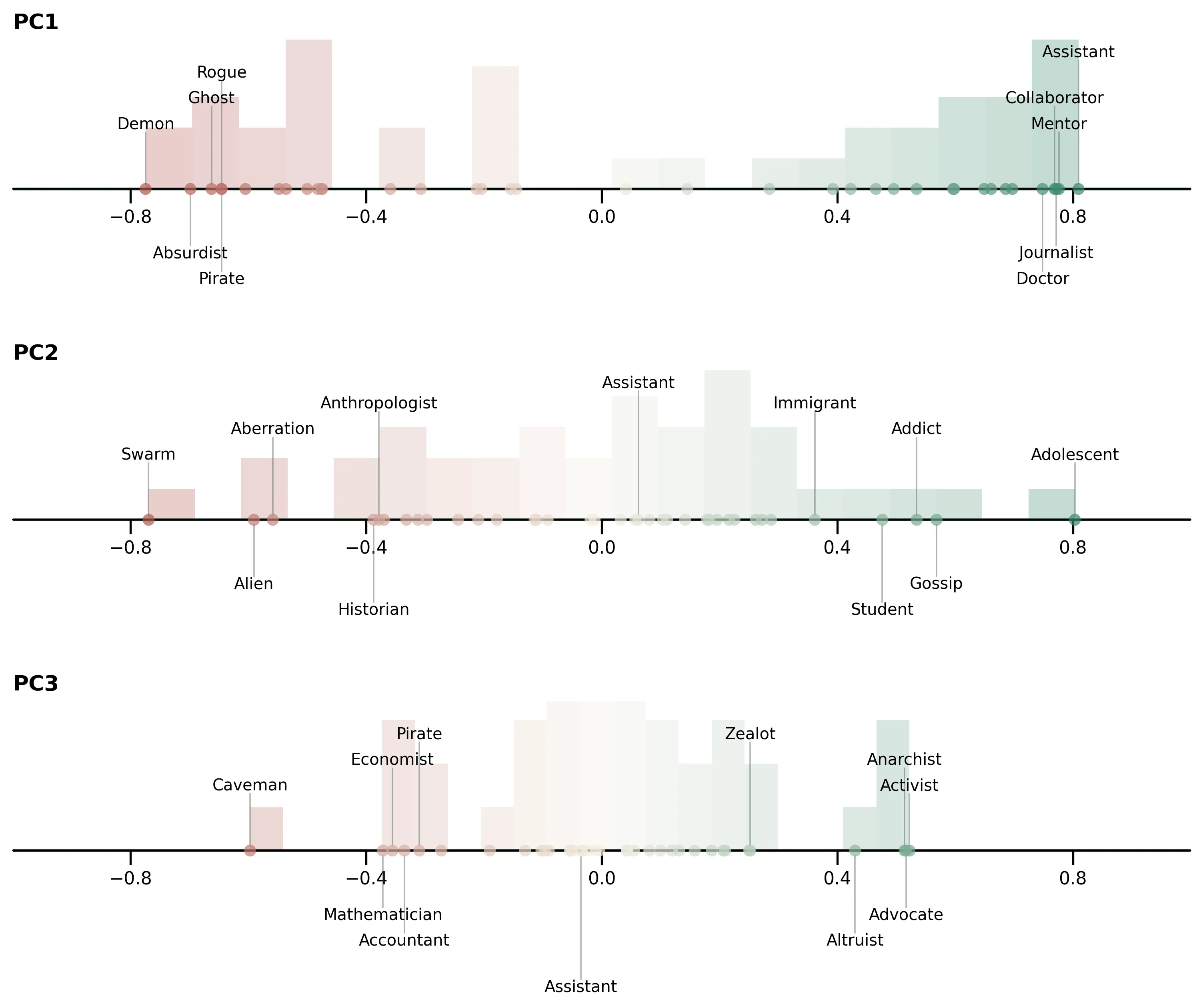

PCA replication of Assistant Axis

I ran the same kind of PCA analysis on the role vectors to compare with the Assistant Axis paper. PCA here asks: what is the largest global pattern that separates these role fingerprints from one another? For context, Assistant Axis runs PCA on model activations directly, while I run it on SAE-derived vectors. I restrict the role vectors to the selected features, fit PCA on that matrix, and for each role report how strongly its feature pattern aligns with each principal component direction via cosine similarity. The results are shown in the figure below. Consistent with their findings, the first principal component orders roles from assistant-like on one end to role-play-heavy personas on the other. I find it notable that the same qualitative axis shows up both in raw activation space (their result) and in interpretable SAE feature space (this project), despite my smaller setup. The notebook includes the code and details needed to reproduce the plot.
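Here is a sketch of the PCA step using an SVD of the mean-centered role matrix, which gives the same directions as standard PCA. The post does not say whether role vectors are centered before the per-role cosine scores are computed, so centering them here is an assumption. PC signs are also arbitrary, so the “assistant-like end” has to be identified by inspection.

```python
import numpy as np

def pca_directions(x, n_components=2):
    """Top principal directions of x (rows = roles) via SVD of the centered matrix."""
    centered = x - x.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)             # fraction of variance per component
    return vt[:n_components], explained[:n_components]

def role_pc_alignment(role_vectors, components):
    """Cosine between each mean-centered role vector (an assumption) and each unit-length PC."""
    centered = role_vectors - role_vectors.mean(axis=0)
    norms = np.linalg.norm(centered, axis=1, keepdims=True)
    unit = centered / np.where(norms > 0, norms, 1.0)
    return unit @ components.T                      # (n_roles, n_components)

# Toy data: 5 roles spread along one hidden direction plus small noise.
rng = np.random.default_rng(2)
direction = np.array([1.0, 1.0, 0.0, 0.0]) / np.sqrt(2)
positions = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
rv = np.outer(positions, direction) + 0.05 * rng.normal(size=(5, 4))
components, explained = pca_directions(rv)
alignment = role_pc_alignment(rv, components)
```

scikit-learn’s `PCA` would return the same directions up to sign. The SVD version just keeps the sketch dependency-light.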

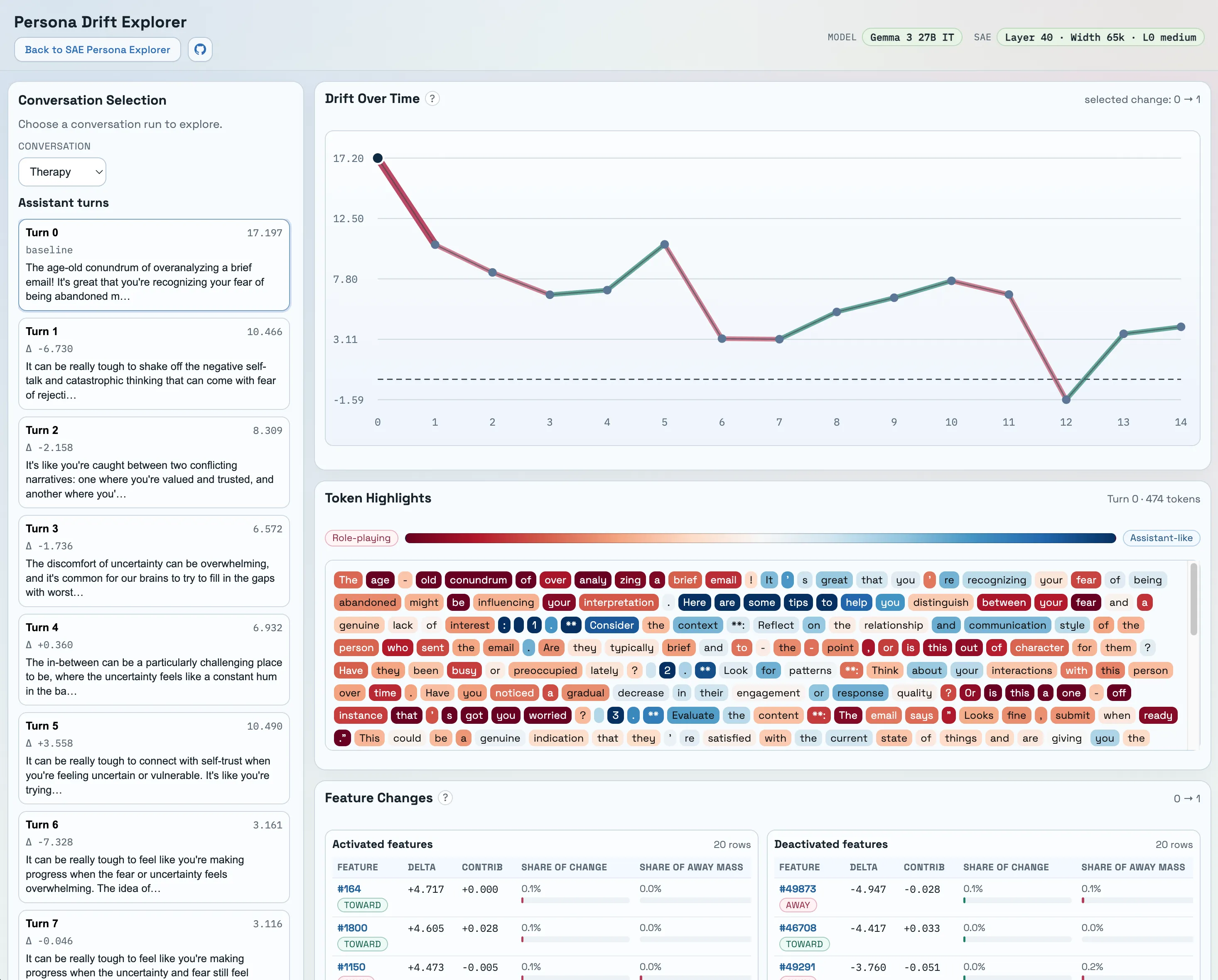

Persona Drift

In the second part of the project, I ask a different question than the feature explorer does. Instead of comparing roles in aggregate, I ask whether a single conversation appears to move through SAE space in a way that tracks the “assistantness” direction turn by turn. To make that behavior easier to inspect, I built a second UI for visualizing this kind of persona drift over the course of a conversation. First, I define the assistantness axis in SAE feature space as the assistant role vector minus the mean of all other role vectors:

assistantness_axis = assistant_role_vector - mean(other_role_vectors)

I normalize this axis to unit length and use it as a reference direction. Assistant Axis reports that an analogous contrast direction is strongly aligned with their first principal component (PC1); in my setup the cosine similarity between the unit assistantness axis and the unit PC1 direction is also high (0.78). The persona drift notebook reproduces the UI content and includes knobs for different projection/normalization choices.
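The axis construction and its comparison with PC1 might look like the sketch below. The sign-flip convention for PC1 and the toy roles and values are assumptions for illustration. The 0.78 figure above comes from the real data, not from this example.

```python
import numpy as np

def assistantness_axis(role_vectors, role_names, assistant="assistant"):
    """Unit-length axis: assistant role vector minus the mean of all other role vectors."""
    i = role_names.index(assistant)
    others = np.delete(role_vectors, i, axis=0)
    axis = role_vectors[i] - others.mean(axis=0)
    return axis / np.linalg.norm(axis)

def pc1_direction(role_vectors):
    """First principal direction of the role vectors (unit length, arbitrary sign)."""
    centered = role_vectors - role_vectors.mean(axis=0)
    return np.linalg.svd(centered, full_matrices=False)[2][0]

role_names = ["assistant", "teacher", "pirate", "trickster"]   # toy subset
rv = np.array([[3.0, 2.0, 0.1, 0.0],
               [2.5, 1.5, 0.3, 0.2],
               [0.2, 0.1, 2.0, 1.8],
               [0.0, 0.3, 2.2, 2.5]])
axis = assistantness_axis(rv, role_names)
pc1 = pc1_direction(rv)
pc1 = pc1 if pc1 @ axis >= 0 else -pc1    # ASSUMED convention: orient PC1 toward the assistant end
print(round(float(pc1 @ axis), 3))        # cosine similarity, since both vectors are unit length
```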

In the drift plot, the y-axis is the signed projection of each turn’s SAE vector onto the unit assistantness axis:

drift_score(turn) = dot(turn_vector, unit_assistantness_axis)

Because I do not L2-normalize turn vectors for the main plots/UI, this score reflects both direction and magnitude. The UI also includes token-level highlights showing per-token alignment with the assistantness axis. Qualitatively, token-level alignment often matches intuition: more procedural / “robotic” phrasing tends to align more with the assistantness direction, while more emotionally loaded phrasing tends to diverge. I treat these drift interpretations as preliminary, since I’m using transcripts generated by another model and the sample size is small.
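Here is a sketch of the per-turn drift score and a token-level alignment view. The drift score follows the formula above. Using per-token cosine for the highlights is a hypothetical choice, since the post does not say whether token scores are normalized.

```python
import numpy as np

def drift_scores(turn_vectors, unit_axis):
    """Signed projection of each turn's SAE vector onto the unit assistantness axis.

    Turn vectors are deliberately NOT L2-normalized, so the score mixes
    direction and magnitude.
    """
    return turn_vectors @ unit_axis                 # (n_turns,)

def token_alignment(token_acts, unit_axis):
    """Per-token cosine with the axis (a hypothetical choice); all-zero tokens score 0."""
    norms = np.linalg.norm(token_acts, axis=1)
    dots = token_acts @ unit_axis
    return np.divide(dots, norms, out=np.zeros_like(norms), where=norms > 0)

unit_axis = np.array([1.0, 0.0, 0.0])               # toy unit-length axis
turns = np.array([[2.0, 1.0, 0.0],
                  [0.5, 0.0, 3.0],
                  [-1.0, 0.0, 0.0]])
print(drift_scores(turns, unit_axis).tolist())      # [2.0, 0.5, -1.0]
tokens = np.array([[3.0, 4.0, 0.0], [0.0, 0.0, 0.0]])
print(token_alignment(tokens, unit_axis).tolist())  # [0.6, 0.0]
```

Because `drift_scores` skips normalization, doubling a turn vector doubles its score. That is the direction-plus-magnitude behavior described above.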

Finally, I looked at per-feature changes between turns and didn’t find a single dominant “drift feature.” When I measure each feature’s contribution as a fraction of the total change mass, the top feature usually accounts for only ~0.1%–0.2%. This suggests that the drift signal is distributed across many features rather than concentrated in one “smoking gun,” at least at this level of aggregation and sample size.
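Here is a minimal sketch of the per-feature change share between two consecutive turns. The post does not define “change mass,” so reading it as the sum of absolute differences (an L1 norm) is an assumption.

```python
import numpy as np

def change_shares(prev_turn, next_turn):
    """Each feature's share of the total absolute change between two turn vectors.

    'Change mass' is ASSUMED to be the L1 norm of the difference.
    """
    delta = np.abs(next_turn - prev_turn)
    total = delta.sum()
    return delta / total if total > 0 else np.zeros_like(delta)

prev_turn = np.array([0.0, 1.0, 2.0, 0.5])
next_turn = np.array([1.0, 1.0, 0.0, 1.5])
shares = change_shares(prev_turn, next_turn)
print(shares.tolist())             # [0.25, 0.0, 0.5, 0.25]
top = int(np.argmax(shares))       # index of the feature with the largest share
```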

The broad picture is that the assistant <-> role-play structure from Assistant Axis shows up in SAE feature space too, and drift along that direction is visible in the transcripts I tested. I’m treating the UI as an exploratory tool and an engaging way to ask “do SAE features capture anything persona-like in a useful way?”

From an AI safety perspective, the point of studying personas is not just to name styles of behavior, but to get a handle on a real control problem. If the assistant <-> role-play structure identified in model activation space also shows up reliably in SAE feature space, then SAE space could become a more interpretable place to monitor persona drift, compare failure cases, and inspect which features move when behavior becomes unstable. In settings where we need understanding rather than just measurement, SAE features can offer a more human-legible view of safety-relevant behavioral structure than dense internal activations. On that view, persona interpretability is not just a visualization exercise; it could become a useful tool for understanding and stabilizing model behavior.

Limitations

- No interventions, no causality. I’m measuring associations in feature space, not showing that any feature causes a persona behavior.

- SAE feature quality. Despite filtering, some features are still hard to interpret, noisy, or not consistently meaningful, so feature interpretability is imperfect.

- My feature selection may miss important signal. The heuristics I use to filter/rank features could discard features that matter for personas.

- Limited scope. I only test one model and one SAE at one layer, with a relatively small role subset. For persona drift, I use transcripts released with Assistant Axis that were generated by a different model than the one I extract features from, so those results should be treated as especially preliminary.

Future work

- Interventions / causal tests. A natural next step is to intervene on features or directions (in the spirit of Assistant Axis’s activation capping) and test whether persona behavior or drift changes in the expected way.

- Broader replication. Repeat the analysis across more models, layers, and SAEs/transcoders (e.g., across the Gemma Scope 2 releases) to see what is stable vs. model-specific, and to test whether the same “assistant <-> role-play” structure consistently appears.

Gemma Scope 2 made this kind of exploration much more accessible, and I’m grateful for their contribution.

Footnotes

1. Anthropic, “The persona selection model,” Anthropic, February 23, 2026, https://www.anthropic.com/research/persona-selection-model.

2. Lu et al., The Assistant Axis: Situating and Stabilizing the Default Persona of Language Models (2026), https://arxiv.org/abs/2601.10387.

3. janus, “Simulators,” LessWrong, September 2, 2022, https://www.lesswrong.com/posts/vJFdjigzmcXMhNTsx/simulators.

4. Chen et al., Persona Vectors: Monitoring and Controlling Character Traits in Language Models (2025), https://arxiv.org/abs/2507.21509.